Prospective vs Retrospective

The quiet difference between two kinds of validation studies decides whether a healthcare AI product will work in the world. Most published claims live on the wrong side of it.

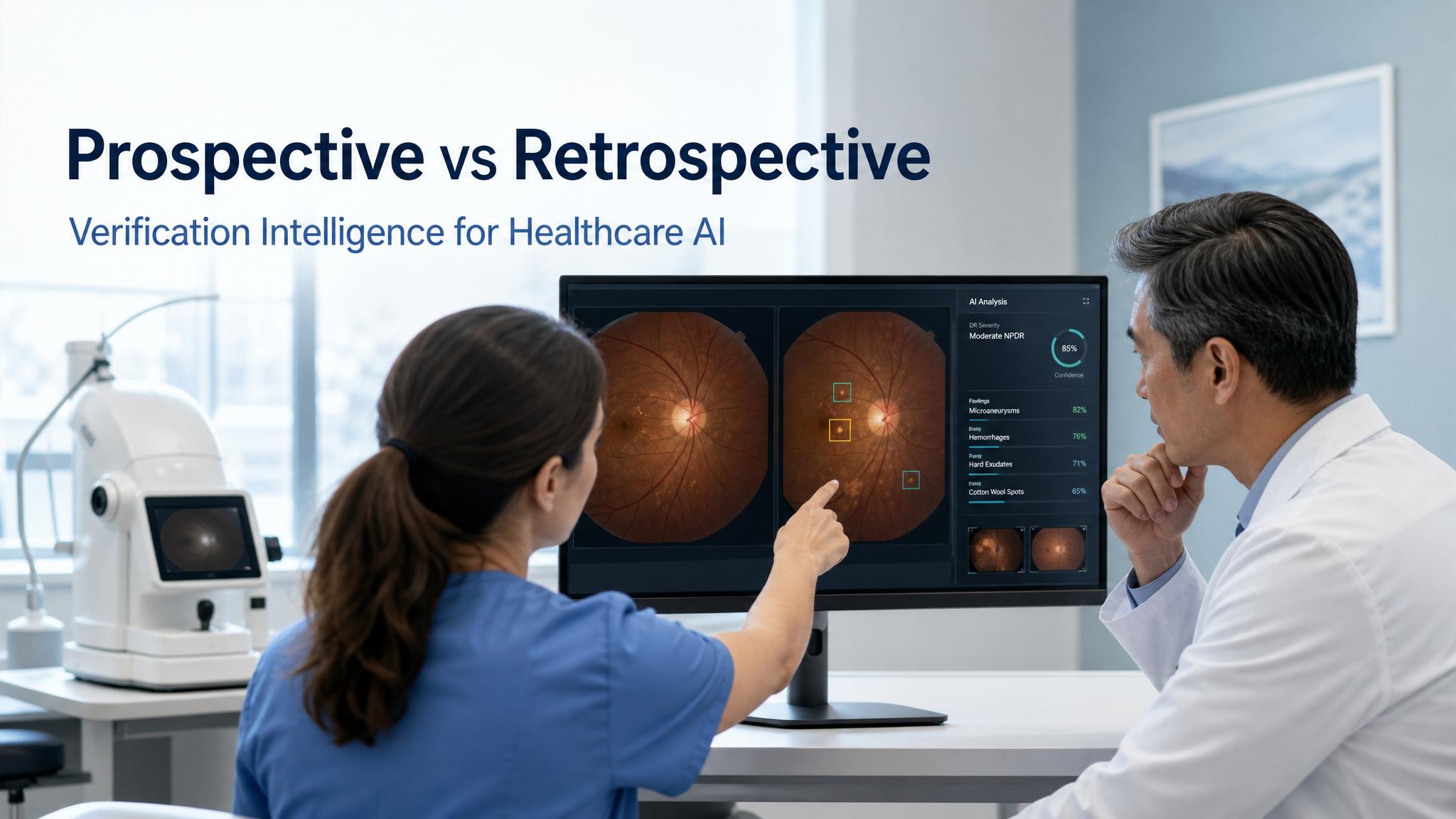

In November of 2018, a small team from Google Health and the Thai Ministry of Public Health installed a deep learning system in eleven primary care clinics across the provinces of Pathum Thani and Chiang Mai. The system, developed by some of the most accomplished computer vision researchers in the world, had been tested on retrospective datasets of retinal photographs and achieved an accuracy that the team’s published work described as comparable to retina specialists. On a fundus image of an eye affected by diabetic retinopathy, the algorithm could, in laboratory conditions, identify the disease with accuracy exceeding ninety percent, returning a result in under ten minutes. The hope, written into the deployment, was that the algorithm could compress a screening pipeline that, under the existing system in Thailand, could take ten weeks from photograph to specialist read into a near instantaneous primary care decision. Diabetic retinopathy is the leading cause of preventable blindness in adults. Thailand had four and a half million patients with diabetes and roughly two hundred retina specialists. The math, on paper, made a case the team found compelling.

Over the following nine months, the team observed what actually happened. A subsequent paper by Emma Beede and colleagues, published at the ACM CHI conference in April of 2020, documented the gap with unusual transparency. The system rejected roughly twenty one percent of the more than eighteen hundred fundus images nurses captured during the deployment as too low quality to interpret. The rejection rate, in lab conditions, had been a small fraction of that. The cause, the team determined, was clinic lighting. The fundus cameras the lab had used were positioned in controlled imaging suites with the ambient light optimized for retinal photography. The cameras in Thai primary care clinics sat in rooms with whatever lighting the room had, and the resulting images, while perfectly adequate for the nurses’ own assessment, fell outside the input distribution the algorithm had been trained on. Internet connectivity at several sites was slow enough that an image upload that took seconds in the lab took minutes in the clinic. Nurses, in practice, screened roughly ten patients per two hour session, well below the throughput the workflow had been designed around. Some nurses began discouraging participation entirely, on the grounds that the system was creating bottlenecks rather than relieving them. Patients flagged as positive were referred to specialists who, in some cases, found nothing referable.

None of this was a story of failed artificial intelligence. The algorithm was good. On the photographs it could read, it performed well, and a more rigorous follow up study, published in The Lancet Digital Health in 2022, demonstrated specialist level diagnostic capability across nine primary care sites with 7,940 enrolled patients. The gap between the lab and the clinic was not, as it turned out, about the algorithm. It was about everything the algorithm had not been trained on: the lighting, the cameras, the internet, the workflows, the patient flow, the implicit assumptions about who would be present at the screening, and the implicit assumptions about what data quality looked like in the clinic. The retrospective validation had answered one question with sophistication and care. The prospective deployment was answering a different question, one that the retrospective validation could not, by its nature, have answered.

This piece is about that gap. It is the gap that decides whether a healthcare AI product, regardless of how well it performs in the published literature, will actually work in the world. It is one of the most consistent predictors of healthcare AI failure, and one of the least understood patterns in the popular coverage of the field. The reader who learns to distinguish a retrospective validation study from a prospective deployment trial, and to ask the right questions when only one of the two has been done, will see a different shape of the field than the field’s marketing departments tend to project.

What the two words mean

In medicine, a retrospective study looks at data that has already been collected, often for some other purpose, and asks questions of it after the fact. A prospective study, by contrast, defines its question in advance, recruits participants going forward, and follows them through a planned protocol. The distinction is foundational in clinical epistemology because the two kinds of studies answer related but importantly different questions. Retrospective studies can find associations in data that already exist. Prospective studies can test whether an intervention works under conditions controlled to support that test.

In healthcare AI, the same distinction maps onto two recognizably different stages of validation. The first stage, retrospective validation, runs the algorithm on a dataset that has already been collected, often the same dataset family on which the algorithm was trained. The dataset is split into training and test partitions, the algorithm is trained on one, evaluated on the other, and performance metrics are reported. This is the work most published healthcare AI papers describe. It is the foundation of most FDA 510(k) submissions for AI-enabled devices, the substance of most validation claims on company homepages, and the source of most of the dramatic accuracy numbers that travel through the press. It is also, on its own, an unreliable predictor of how the algorithm will perform in deployment.

The second stage, prospective deployment validation, runs the algorithm forward in time, in the actual setting where it will be used, on patients who present in the ordinary course of clinical operations, and measures clinical outcomes that matter to patients and clinicians. This is the work that healthcare AI startups talk about in pitch decks more often than they fund. Prospective deployment studies are expensive, slow, and risky for the developer. They sometimes fail. When they succeed, they are far more meaningful evidence than retrospective validation, because they answer the question that retrospective validation cannot answer: whether the algorithm works in the conditions where it will actually be used.

The healthcare AI field has, in the seven years since prospective deployment trials became a topic of serious methodological attention, developed standards for how to do them. The CONSORT-AI and SPIRIT-AI extensions, published simultaneously in Nature Medicine, The BMJ, and The Lancet Digital Health in September of 2020 by Xiaoxuan Liu, Samantha Cruz Rivera, and a large international consortium, lay out reporting and protocol standards for AI clinical trials. The DECIDE-AI guidelines, published in 2022, address the early stage clinical evaluation phase that sits between technical validation and randomized trial. The IDEAL framework, originally developed for surgical innovation, has been adapted for AI deployment. These standards are useful. They are also, in current practice, observed more often in the breach than in the keeping.

Four mechanisms that separate the lab from the clinic

The gap between retrospective validation and prospective deployment is not a single phenomenon. It is a set of four overlapping mechanisms, each of which can cause an algorithm that looks excellent in retrospective testing to fail in real use. The careful reader of healthcare AI claims should be able to recognize each of them.

Data leakage and label leakage

The first mechanism, and the one most likely to produce dramatic performance overstatements, is leakage. Data leakage occurs when information from the test set, the data on which the algorithm’s performance is being measured, has somehow influenced the training. This can happen in obvious ways, such as patients appearing in both the training and test partitions, and in subtle ways, such as image preprocessing pipelines that were tuned on the entire dataset before the train and test split was made. Label leakage occurs when the algorithm has access to information that would not be available at the moment of clinical decision. The Epic Sepsis Model, which this publication has written about in earlier pieces, was found to be using the administration of antibiotics as one of its predictive features. Antibiotics are something clinicians order when they have already begun to suspect sepsis. The model was, in a sense, detecting the doctor’s suspicion. The leakage produced a retrospective performance number that overstated the model’s actual prospective utility, because in deployment the antibiotic signal would only fire after the clinical insight the model was meant to provide had already occurred.
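The obvious form of data leakage, the same patient on both sides of the split, is easy to demonstrate. The sketch below is illustrative only: it assumes a hypothetical list of records keyed by `patient_id`, and `split_by_record`, `split_by_patient`, and `shared_patients` are names invented for this example rather than any library's API. Splitting individual images lets one patient's eyes land in both partitions; splitting by patient does not.

```python
import random
from collections import defaultdict


def split_by_record(records, test_fraction=0.2, seed=0):
    """Naive split: shuffles individual records, so one patient can land on both sides."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def split_by_patient(records, test_fraction=0.2, seed=0):
    """Grouped split: every record from a given patient goes to the same partition."""
    by_patient = defaultdict(list)
    for rec in records:
        by_patient[rec["patient_id"]].append(rec)
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)
    test_ids = set(patients[: max(1, int(len(patients) * test_fraction))])
    train = [r for p in patients if p not in test_ids for r in by_patient[p]]
    test = [r for p in patients if p in test_ids for r in by_patient[p]]
    return train, test


def shared_patients(train, test):
    """Patients present in both partitions; any non-empty result is leakage."""
    return {r["patient_id"] for r in train} & {r["patient_id"] for r in test}


# Hypothetical data: 50 patients, 4 images each (for example, both eyes at two visits).
records = [{"patient_id": p, "image_id": f"{p}-{i}"} for p in range(50) for i in range(4)]

train, test = split_by_record(records)
print(len(shared_patients(train, test)))  # nonzero: the same patients appear on both sides
train, test = split_by_patient(records)
print(len(shared_patients(train, test)))  # 0
```

The same discipline applies to the subtler case: any normalization or tuning step should be fitted on the training partition alone, after the split has been made.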

The reader’s question, when encountering any retrospective validation claim, is what features the model uses to make its prediction and whether any of those features would, at the moment of clinical decision, be downstream of the very judgment the model is meant to help with. A model that predicts hospital readmission using data from the day of discharge is doing something different from a model that uses data from the day of admission. A model that detects cancer using a pathology image with the cancer already marked by a previous reader is doing something different from a model that detects cancer in unmarked images. The questions are not always easy to ask. The methodological literature is full of cases where they were not asked, and where the resulting performance numbers, often impressively high, did not survive contact with prospective deployment.
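Label leakage is a question of timing, and one way to make the question concrete is to tie every feature to the moment it was recorded. The sketch below assumes a hypothetical `Observation` record with a timestamp and hypothetical feature names; it is not how the Epic Sepsis Model or any vendor pipeline is built. Only what was recorded before the decision time is allowed into the model's inputs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Observation:
    name: str             # hypothetical feature name, e.g. "lactate" or "antibiotic_order"
    value: float
    recorded_at: datetime


def features_available_at(observations, decision_time):
    """Return the latest value of each feature recorded strictly before decision_time.

    Anything recorded at or after decision_time, such as an antibiotic order placed
    because a clinician already suspects sepsis, is excluded: it would not exist yet
    at the moment the prediction is supposed to help.
    """
    features = {}
    for obs in sorted(observations, key=lambda o: o.recorded_at):
        if obs.recorded_at < decision_time:
            features[obs.name] = obs.value  # later readings overwrite earlier ones
    return features


admit = datetime(2024, 3, 1, 8, 0)
obs = [
    Observation("heart_rate", 112.0, admit + timedelta(hours=1)),
    Observation("lactate", 2.9, admit + timedelta(hours=3)),
    Observation("antibiotic_order", 1.0, admit + timedelta(hours=5)),
]
decision_time = admit + timedelta(hours=4)
print(features_available_at(obs, decision_time))
# {'heart_rate': 112.0, 'lactate': 2.9}
```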

Site-specific signals

The second mechanism is the one Zech and colleagues at Mount Sinai documented in a 2018 PLOS Medicine paper that has, in the years since, become a foundational reference in the methodological critique of healthcare AI. The team trained convolutional neural networks to detect pneumonia on chest radiographs drawn from three institutions: the National Institutes of Health Clinical Center, Mount Sinai Hospital, and the Indiana University Network for Patient Care. The models performed well on data from the institutions on which they were trained. Performance dropped, sometimes substantially, when the models were evaluated on data from institutions they had not seen during training. The mechanism, which the team probed directly, was striking. The models had learned to identify the originating hospital itself with near perfect accuracy: 99.95 percent for NIH and 99.98 percent for Mount Sinai. They had not learned only to identify pneumonia. They had also learned to identify the hospital, and the hospital was correlated, in the training data, with the prevalence of pneumonia.

This is a structural problem with retrospective validation in healthcare AI, and it has held up in the years of follow up work since. Radiological images from different institutions differ in ways the human eye does not register but a convolutional network reliably can: scanner models, image processing pipelines, compression algorithms, technologist habits, patient positioning conventions, and the markings that radiology departments add to images for their own workflow purposes. A model trained on data from one set of institutions will, predictably, pick up these institutional fingerprints and use them as predictive features. The model’s apparent accuracy on held out data from the same institutions reflects this. The model’s actual accuracy on new institutions does not.
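The confound can be reproduced in miniature without any images at all. The toy below is an illustration under stated assumptions, not a reanalysis of the Zech paper: hospitals `A`, `B`, and `C` and their prevalences are invented, and the "model" is handed the hospital label directly, where a convolutional network would have to infer it from pixels. It scores every patient by nothing more than their hospital's pneumonia prevalence in the training data.

```python
from collections import Counter


def auc(scores, labels):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def fit_site_only_model(train):
    """'Learns' nothing but each hospital's pneumonia prevalence in the training data."""
    totals, positives = Counter(), Counter()
    for site, label in train:
        totals[site] += 1
        positives[site] += label
    overall = sum(positives.values()) / sum(totals.values())
    rates = {site: positives[site] / totals[site] for site in totals}
    return lambda site: rates.get(site, overall)  # unseen hospital -> overall prevalence


def make_site(name, n, n_pos):
    """Hypothetical cohort of n patients from one hospital, n_pos of them with pneumonia."""
    return [(name, 1)] * n_pos + [(name, 0)] * (n - n_pos)


model = fit_site_only_model(make_site("A", 100, 30) + make_site("B", 100, 5))

internal = make_site("A", 100, 30) + make_site("B", 100, 5)  # same hospitals as training
external = make_site("C", 100, 15)                           # a hospital the model never saw
for name, data in [("internal", internal), ("external", external)]:
    scores = [model(site) for site, _ in data]
    labels = [label for _, label in data]
    print(name, round(auc(scores, labels), 3))
# internal 0.716
# external 0.5
```

The internal AUC is well above chance even though the model knows nothing about any patient's lungs; at a new hospital the same model is no better than a coin flip.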

The Zech finding generalizes. The Epic Sepsis Model performed differently across hospitals to a degree that, by the time later analyses spanning more than 800,000 patient encounters had been published, the manufacturer had moved to recommending site specific retraining before clinical deployment. Pathology models trained on slides from one laboratory perform differently on slides from another. Cardiology models trained on ECGs from one device manufacturer perform differently on ECGs from another. The pattern is now well established. Retrospective validation that does not include external testing, ideally at sites that did not contribute to the training data at all, is not a reliable predictor of deployment performance. The reader’s question, when encountering any retrospective validation claim, is where the test data came from and how it relates to the training data. If the answer is that the test data came from the same institutions, the same scanners, or the same data collection pipeline as the training data, the validation has answered a much narrower question than the marketing implies.
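External testing is easiest to enforce when it is built into the split itself. One common design, sketched here under the assumption that each record carries a hypothetical `site` field, is leave-one-site-out evaluation: each site is scored only by a model trained on the other sites.

```python
from collections import defaultdict


def leave_one_site_out(records, site_key="site"):
    """Yield (held_out_site, train, test) so each site is tested only by a model that never saw it."""
    by_site = defaultdict(list)
    for rec in records:
        by_site[rec[site_key]].append(rec)
    for held_out in sorted(by_site):
        train = [r for site, recs in by_site.items() if site != held_out for r in recs]
        yield held_out, train, by_site[held_out]


# Hypothetical records from three sites.
records = [{"site": s, "id": i} for i, s in enumerate(["north"] * 3 + ["south"] * 2 + ["east"] * 4)]
for site, train, test in leave_one_site_out(records):
    assert all(r["site"] != site for r in train)  # no training record from the held-out site
    print(site, len(train), len(test))
# east 5 4
# north 6 3
# south 7 2
```

This is still retrospective. It tests whether performance transfers across institutions, a stronger claim than same-site held out accuracy, but it says nothing about workflow or deployment conditions.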

Distribution shift in deployment populations

The third mechanism is distribution shift. Healthcare AI models, like all statistical models, learn the patterns present in their training data. When deployed in a population whose distribution differs from the training distribution, performance can degrade in ways that the developers may not anticipate.

Distribution shift in healthcare comes in several flavors. The training cohort may be drawn from a tertiary academic medical center and the deployment may be in community primary care, with the obvious implication that the prevalence and presentation of disease will differ. The training cohort may overrepresent certain demographic groups and the deployment may include populations the model has seen less of, with associated implications for model accuracy across those groups. The training data may have been collected during one season, era, or stage of a disease’s natural history, and the deployment may occur under conditions that have meaningfully changed.
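The first flavor, a change in prevalence, has consequences that can be worked out with arithmetic alone. The sketch below holds a hypothetical sensitivity and specificity of 90 percent fixed and moves prevalence from an invented 30 percent (a referral setting) to an invented 3 percent (community screening). Holding sensitivity and specificity constant is itself a simplifying assumption; under real distribution shift they tend to move too.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Positive and negative predictive value from test characteristics and prevalence."""
    tp = sensitivity * prevalence              # true positives per patient screened
    fp = (1 - specificity) * (1 - prevalence)  # false positives
    tn = specificity * (1 - prevalence)        # true negatives
    fn = (1 - sensitivity) * prevalence        # false negatives
    return tp / (tp + fp), tn / (tn + fn)


for setting, prevalence in [("tertiary referral", 0.30), ("community primary care", 0.03)]:
    ppv, npv = predictive_values(0.90, 0.90, prevalence)
    print(f"{setting}: PPV={ppv:.3f} NPV={npv:.3f}")
# tertiary referral: PPV=0.794 NPV=0.955
# community primary care: PPV=0.218 NPV=0.997
```

At the lower prevalence, roughly four out of five positive results are false positives, even though the model itself has not changed.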

The COVID-19 pandemic, while atypical, produced an instructive case at scale. Many predictive models trained on pre-pandemic data began performing differently on patients seen during the pandemic, because the underlying population, the comorbidity patterns, the care utilization patterns, and the available treatments had all shifted. Models that had been validated on retrospective pre-pandemic data and deployed in pandemic conditions were, in many cases, no longer well calibrated to the population they were now seeing. This is the same mechanism that produces gradual performance decay in deployed clinical AI models over time, sometimes called drift, and it is one of the principal reasons that prospective deployment monitoring, not just one time prospective validation, is part of any responsible healthcare AI deployment.
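Drift monitoring can start with something as simple as comparing the number of events the model expected with the number that occurred. The sketch below computes a per-period observed-to-expected (O/E) ratio on hypothetical quarterly data and flags periods outside an illustrative band of 0.8 to 1.25. The thresholds, period labels, and data are assumptions; a real monitoring plan would add confidence intervals and other calibration measures such as calibration slope.

```python
def observed_to_expected(predictions, outcomes):
    """Observed event count divided by the count the model expected (sum of predicted risks)."""
    expected = sum(predictions)
    return sum(outcomes) / expected if expected else float("nan")


def flag_drift(periods, low=0.8, high=1.25):
    """Return (label, rounded O/E) for periods outside the band; a NaN ratio is also flagged."""
    flagged = []
    for label, preds, outs in periods:
        ratio = observed_to_expected(preds, outs)
        if not (low <= ratio <= high):
            flagged.append((label, round(ratio, 2)))
    return flagged


def period(label, n, predicted_risk, events):
    """Hypothetical period: n patients all scored at the same risk, with `events` outcomes."""
    return label, [predicted_risk] * n, [1] * events + [0] * (n - events)


periods = [
    period("2019-Q4", 100, 0.10, 10),  # model expects about 10 events, sees 10
    period("2020-Q1", 100, 0.10, 11),
    period("2020-Q2", 100, 0.10, 25),  # the population has shifted under the model
]
print(flag_drift(periods))  # [('2020-Q2', 2.5)]
```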

The reader’s question, when encountering a retrospective validation claim, is whether the validation population matches the deployment population in ways that matter. A model validated on academic medical center patients and deployed in rural primary care has not, in any meaningful sense, been validated for that deployment. The marketing language may not make this distinction. The methodological responsibility for noticing it falls on the reader, the procurement officer, the clinician, and ultimately the patient.
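One way to ask whether a validation population matches a deployment population is to compare them characteristic by characteristic. The sketch below uses the standardized mean difference, with the commonly used rule of thumb that an absolute value above 0.1 signals meaningful imbalance; the cohorts and values are hypothetical.

```python
import math
import statistics


def smd_continuous(a, b):
    """Standardized mean difference using the pooled sample standard deviation."""
    pooled_sd = math.sqrt((statistics.variance(a) + statistics.variance(b)) / 2)
    return (statistics.mean(a) - statistics.mean(b)) / pooled_sd


def smd_proportion(p1, p2):
    """Standardized difference for a binary characteristic, given two proportions."""
    return (p1 - p2) / math.sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / 2)


validation_ages = [62, 58, 70, 66, 64]  # hypothetical validation cohort
deployment_ages = [45, 50, 38, 55, 47]  # hypothetical deployment cohort
checks = {
    "age": smd_continuous(validation_ages, deployment_ages),
    "female": smd_proportion(0.50, 0.52),
}
for name, value in checks.items():
    flag = "imbalanced" if abs(value) > 0.1 else "ok"
    print(f"{name}: SMD={value:.2f} ({flag})")
# age: SMD=3.12 (imbalanced)
# female: SMD=-0.04 (ok)
```

A large difference does not prove the model will fail in the new population, and a small one does not prove it will succeed. It identifies where the validation evidence stops covering the deployment.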

The socio-environmental layer

The fourth mechanism is the one the Google Thailand deployment surfaced with the most clarity. Retrospective validation, by its nature, isolates the algorithm from the conditions under which it will be used. The retrospective dataset is curated, the image quality is controlled, the labels are consistent, the workflow is irrelevant because the algorithm runs on stored data outside of any clinical context. The prospective deployment puts the algorithm into a workflow with cameras the developers did not specify, lighting they did not control, internet they did not provision, nurses they did not train, patients they did not select, and time pressures they did not anticipate. A meaningful fraction of healthcare AI deployment performance is determined by these factors and not, primarily, by the algorithm itself.

The Beede paper documents the Thailand case with unusual transparency. Other deployments have run into related issues with less public reporting. Algorithms trained on high resolution images degrade when deployed with cheaper sensors. Algorithms trained on data collected under a careful protocol degrade when deployed in workflows where the protocol is approximated. Algorithms designed to assist clinicians degrade when deployed without the clinician training that the assistance presupposes. The category of failure is not algorithmic failure. It is socio-technical failure, and it is invisible to retrospective validation.
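The socio-environmental layer only becomes visible when the deployment itself is measured. As a sketch, assuming a hypothetical log of `(site, gradable)` pairs and an invented 10 percent tolerance, a per-site rate of ungradable images is the kind of quantity a curated retrospective dataset cannot produce.

```python
from collections import defaultdict


def ungradable_rates(log):
    """Fraction of images rejected as ungradable, per site, from a deployment log."""
    counts = defaultdict(lambda: [0, 0])  # site -> [ungradable, total]
    for site, gradable in log:
        counts[site][1] += 1
        if not gradable:
            counts[site][0] += 1
    return {site: bad / total for site, (bad, total) in counts.items()}


def sites_needing_attention(log, max_rate=0.10):
    """Sites whose ungradable rate exceeds an illustrative tolerance."""
    return sorted(site for site, rate in ungradable_rates(log).items() if rate > max_rate)


# Hypothetical log: one clinic with controlled lighting, one without.
log = ([("clinic_1", True)] * 95 + [("clinic_1", False)] * 5
       + [("clinic_2", True)] * 70 + [("clinic_2", False)] * 30)
print(ungradable_rates(log))         # {'clinic_1': 0.05, 'clinic_2': 0.3}
print(sites_needing_attention(log))  # ['clinic_2']
```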

The DECIDE-AI guidelines, mentioned earlier, exist precisely to address this category. The framework defines a clinical evaluation phase between technical validation and randomized trial, in which the algorithm is deployed in a small number of clinical settings under careful observation, the failure modes are documented, and the deployment is iterated. This is, in the Google Thailand case, what the later Lancet Digital Health study by Paisan Ruamviboonsuk and colleagues represents. The system was deployed prospectively in nine primary care sites in Thailand’s national diabetic retinopathy screening program, with attention to image quality, workflow integration, patient education, and specialist over-read as a safety mechanism. The published results showed real time diagnostic capability comparable to retina specialists, with the explicit caveat that the socio-environmental factors had to be deliberately accounted for. The conclusion, in the authors’ framing, was that the algorithm could work in this setting, but only with the integration work the Beede deployment had shown was necessary.

What good prospective validation looks like

The reader who has absorbed the four mechanisms is in a position to recognize, with reasonable confidence, when a healthcare AI claim has been prospectively validated and when it has not. The signs of a real prospective deployment study are recognizable in the published literature. The study reports its protocol in advance, ideally with a preregistration on ClinicalTrials.gov or a similar registry. The protocol specifies the inclusion and exclusion criteria for patients and for input data, the primary and secondary outcomes, the analysis plan, and the conditions under which the algorithm will be considered to have succeeded or failed. The reporting follows CONSORT-AI for randomized trials or SPIRIT-AI for protocols. The endpoints are clinical, not analytical: the question is whether the algorithm changes what happens to patients, not whether the algorithm produces outputs that match a reference standard. The validation sites are explicitly different from the training sites, ideally in geographies, populations, and clinical workflows that test the algorithm’s generalization. The deployment is observed under realistic conditions, with documentation of failure modes, workflow disruptions, and clinician interaction patterns. The results, when they appear, are reported with their limitations explicit, and the authors are forthcoming about what the study did not test.

The IDx-DR diabetic retinopathy diagnostic system, which this publication has discussed in earlier pieces, is the canonical positive example. The pivotal trial that supported the FDA De Novo authorization in 2018 was prospective, preregistered, multicenter, and run in primary care settings. The reference standard was the Wisconsin Fundus Photograph Reading Center, an independent academic resource. The authors specified in advance the sensitivity and specificity thresholds the algorithm needed to meet, and the algorithm either met them or did not. This is what a well designed prospective AI trial looks like. It is also rare. Most healthcare AI products on the market in 2026 have not been validated to this standard, and the marketing claims about them should be read accordingly.
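What "specified in advance" can look like, reduced to its skeleton: the endpoints and thresholds are frozen before enrollment, and the analysis simply checks them. This is a hedged sketch, not the IDx-DR statistical analysis plan. The thresholds and counts are hypothetical, and requiring the lower bound of a 95 percent Wilson score interval to clear the threshold is one conservative choice among several a protocol might prespecify.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    threshold: float  # prespecified minimum, fixed before any patient is enrolled


def wilson_lower(successes, n, z=1.96):
    """Lower bound of the Wilson score interval for a binomial proportion."""
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - margin


def evaluate(endpoints, observed):
    """observed maps endpoint name -> (successes, n); pass only if the lower bound clears the threshold."""
    return {e.name: wilson_lower(*observed[e.name]) > e.threshold for e in endpoints}


protocol = (Endpoint("sensitivity", 0.80), Endpoint("specificity", 0.80))
print(evaluate(protocol, {"sensitivity": (174, 200), "specificity": (820, 900)}))
# {'sensitivity': True, 'specificity': True}
print(evaluate(protocol, {"sensitivity": (165, 200), "specificity": (820, 900)}))
# {'sensitivity': False, 'specificity': True}
```

In the second case the point estimate of sensitivity, 82.5 percent, sits above the 80 percent threshold, but the lower bound of the interval does not, so the prespecified criterion is not met.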

The reader’s method

A short working method, sufficient to operate with on most healthcare AI claims, follows from the foregoing.

When a healthcare AI product is described as clinically validated, ask first whether the validation was retrospective or prospective. The answer is often discoverable from the published abstract. Retrospective validation language emphasizes datasets, test partitions, AUCs, and reference standards drawn from existing data. Prospective validation language emphasizes enrolled patients, follow up periods, primary outcomes, and clinical sites.
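The cue words above can be turned into a crude first-pass triage for abstracts. This is a heuristic sketch with hand-picked cue lists and invented example sentences, not a validated classifier; it only suggests which way an abstract leans, and the methods section remains the authority.

```python
import re

RETROSPECTIVE_CUES = ("retrospective", "dataset", "test set", "test partition", "auc", "reference standard")
PROSPECTIVE_CUES = ("prospective", "enrolled", "follow up", "follow-up", "primary outcome", "clinical sites")


def count_cues(text, cues):
    """Count cue phrases that start at a word boundary ('auc' matches 'AUCs' but not 'Caucasian')."""
    lowered = text.lower()
    return sum(len(re.findall(r"\b" + re.escape(cue), lowered)) for cue in cues)


def lean(abstract):
    """Which way an abstract's language leans; a prompt to read further, not a verdict."""
    retro = count_cues(abstract, RETROSPECTIVE_CUES)
    pro = count_cues(abstract, PROSPECTIVE_CUES)
    if pro > retro:
        return "prospective"
    if retro > pro:
        return "retrospective"
    return "unclear"


retro = ("We trained a deep learning model on a retrospective dataset and report its AUC "
         "on a held-out test set graded against a reference standard.")
pro = ("We enrolled 900 patients at four clinical sites; the primary outcome was "
       "referral adherence at six-month follow-up.")
print(lean(retro), lean(pro))  # retrospective prospective
```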

When the answer is retrospective, ask where the test data came from. If it is the same institutions as the training data, the validation has answered a narrow question. If it is from genuinely external institutions, the validation is more meaningful but still does not answer the deployment question.

When the answer is prospective, ask what the endpoints were. A prospective study that measures only diagnostic accuracy is closer to retrospective validation than to a clinical trial. A prospective study that measures clinical outcomes, or change in clinician behavior, or patient experience, is doing the work that retrospective validation cannot.

When no published validation of either kind is available, the model is unvalidated in any meaningful sense, regardless of the marketing language. This is, in 2026, the situation for most healthcare AI products on the market. The reader who recognizes this distribution of evidence is operating with information that most consumers, most journalists, and many investors do not yet have.
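The questions in this method form a short decision procedure. Encoded as a function, with hypothetical string inputs that the reader fills in by hand after reading the paper, it might look like this:

```python
def assess(design=None, test_data_source=None, endpoints=None):
    """Map a reader's answers to the method's conclusions.

    design: "retrospective", "prospective", or None if no published validation exists.
    test_data_source: "same" or "external" (retrospective studies only).
    endpoints: "accuracy" or "clinical" (prospective studies only).
    All values are hypothetical labels chosen for this sketch.
    """
    if design == "prospective":
        if endpoints == "clinical":
            return "addresses the deployment question"
        return "prospective, but accuracy-only endpoints: closer to retrospective validation"
    if design == "retrospective":
        if test_data_source == "external":
            return "more meaningful, but the deployment question remains open"
        return "narrow: test data shares institutions or pipeline with training data"
    return "unvalidated in any meaningful sense"


print(assess("retrospective", test_data_source="same"))
print(assess("prospective", endpoints="clinical"))
print(assess())
```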

Back to Thailand

The Google diabetic retinopathy system, as of the most recent published work, can be made to function in primary care settings in Thailand. The work to get it there was substantial. The team that did it earned, in this publication’s view, the credibility that a transparent prospective evaluation confers. Many other healthcare AI developers will not do that work, and why their products are described in the same terms as the products that have been through it is one of the central reading challenges of the field.

The distinction between retrospective validation and prospective deployment is not a technical curiosity. It is the line between the question that has been answered and the question that has not. When the published evidence speaks only to the former, the marketing claim that speaks to the latter is, on the available evidence, unsupported. When the published evidence speaks to both, with the prospective work meeting current methodological standards, the marketing claim is operating on real ground. The reader who learns to ask which kind of validation is being described, and to recognize the answers in the published literature, has gained one of the most reliable tools in the verification intelligence toolkit. The work the reader will do with that tool, applied to claim after claim across the field, will produce a more accurate map of healthcare AI than the field’s own marketing has any incentive to produce.

This is what this publication means by reading the evidence on its own terms. The two words at the top of this piece, prospective and retrospective, mark a small distinction that determines a large fraction of what healthcare AI claims actually mean. We will return to them often.

Sources and further reading

Beede E, Baylor E, Hersch F, Iurchenko A, Wilcox L, Ruamviboonsuk P, Vardoulakis L. A human-centered evaluation of a deep learning system deployed in clinics for the detection of diabetic retinopathy. Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems. ACM, April 2020.

Ruamviboonsuk P, Tiwari R, Sayres R, et al. Real-time diabetic retinopathy screening by deep learning in a multisite national screening programme: a prospective interventional cohort study. The Lancet Digital Health. 2022;4(4):e235 to e244.

Zech JR, Badgeley MA, Liu M, Costa AB, Titano JJ, Oermann EK. Variable generalization performance of a deep learning model to detect pneumonia in chest radiographs: a cross-sectional study. PLOS Medicine. 2018;15(11):e1002683.

Wong A, Otles E, Donnelly JP, et al. External validation of a widely implemented proprietary sepsis prediction model in hospitalized patients. JAMA Internal Medicine. 2021;181(8):1065 to 1070.

Liu X, Cruz Rivera S, Moher D, Calvert MJ, Denniston AK; SPIRIT-AI and CONSORT-AI Working Group. Reporting guidelines for clinical trial reports for interventions involving artificial intelligence: the CONSORT-AI extension. Nature Medicine. 2020;26(9):1364 to 1374.

Cruz Rivera S, Liu X, Chan AW, Denniston AK, Calvert MJ; SPIRIT-AI and CONSORT-AI Working Group. Guidelines for clinical trial protocols for interventions involving artificial intelligence: the SPIRIT-AI extension. Nature Medicine. 2020;26(9):1351 to 1363.

The DECIDE-AI Steering Group. DECIDE-AI: new reporting guidelines to bridge the development to implementation gap in clinical artificial intelligence. Nature Medicine. 2021;27(2):186 to 187.

Abramoff MD, Lavin PT, Birch M, Shah N, Folk JC. Pivotal trial of an autonomous AI based diagnostic system for detection of diabetic retinopathy in primary care offices. npj Digital Medicine. 2018;1:39.

Heaven WD. Google’s medical AI was super accurate in a lab. Real life was a different story. MIT Technology Review. April 27, 2020.

For broader methodological context on AI model generalization, see also Recht B, Roelofs R, Schmidt L, Shankar V. Do ImageNet classifiers generalize to ImageNet? ICML. 2019.