What “Clinically Validated” Actually Means

A single phrase covers at least four different evidentiary realities. Learning to tell them apart is the first move in reading healthcare AI.

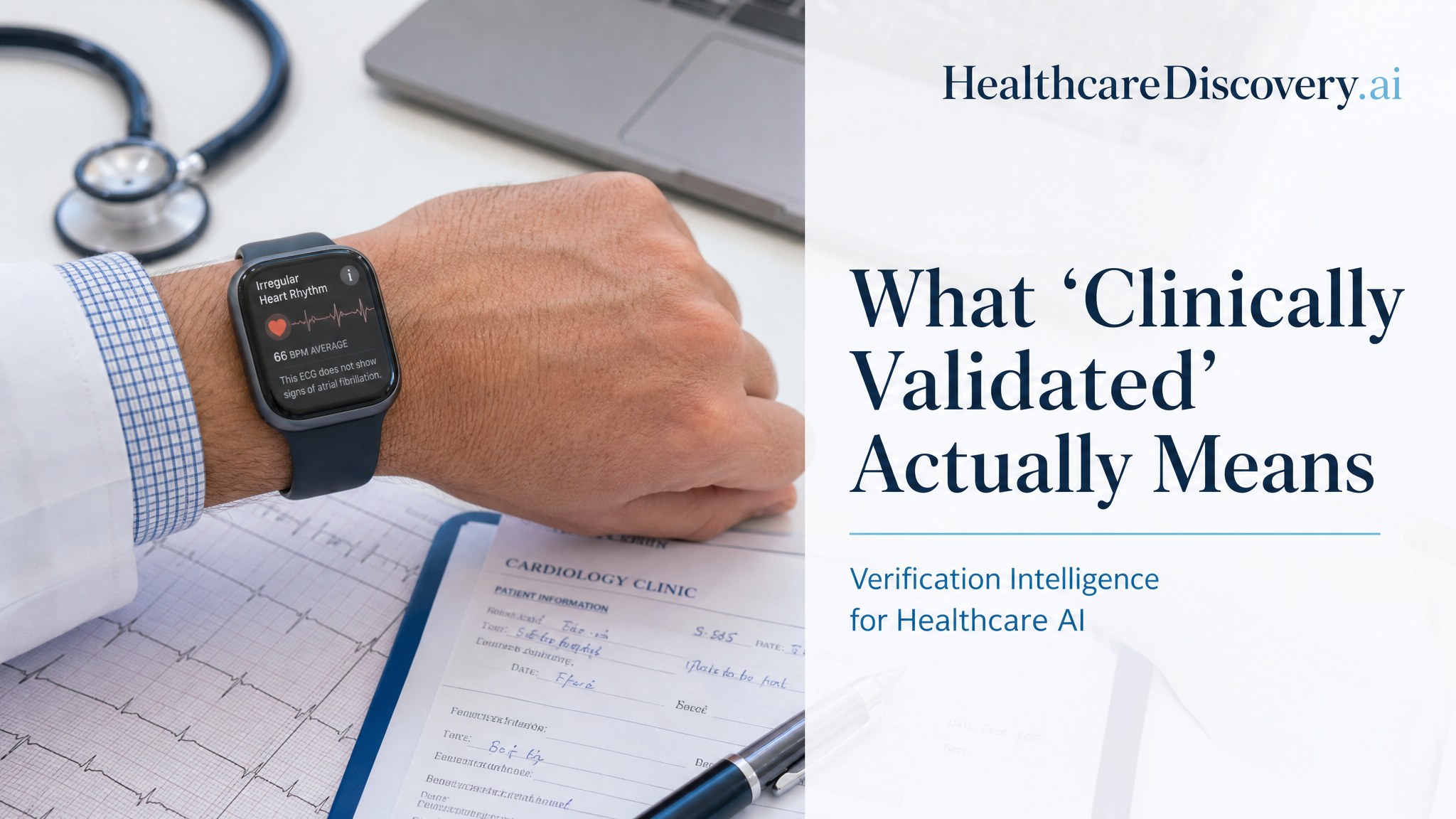

The notification arrives on the wrist at two in the afternoon. A small icon. A discreet phrase. Irregular Heart Rhythm Detected. The user, forty-two, healthy, sitting at a desk in a software company, looks down. The watch, the user has been told in marketing materials and in news stories and in casual remarks from other people who own one, has been clinically validated. The watch, then, has detected something. The user puts down the laptop, picks up the phone, and starts looking for a cardiologist.

What the user has just experienced is a notification fired by an algorithm whose statistical properties are precisely characterized in the published literature yet almost entirely opaque to the person holding the device. The algorithm, the Apple Watch irregular pulse notification, was studied in one of the largest virtual clinical trials ever conducted, the Apple Heart Study, published in the New England Journal of Medicine in November 2019. The study enrolled 419,297 participants over eight months. Among the small subset of notified participants who went on to wear a follow-up ECG patch, a notification that arrived while the patch was recording coincided with atrial fibrillation on the ECG eighty-four percent of the time. That positive predictive value is the source of most of the confident claims about the watch in popular coverage.

The same study contains other numbers, less often cited. Of the notified participants who returned an ECG patch for analysis, only thirty-four percent had atrial fibrillation detected at any point during the full patch-monitoring period. Only about half a percent of all participants ever received a notification at all, which reflected the study’s relatively young population, with a mean age of forty-one. In a letter published in NEJM shortly after the original paper, an independent group noted that among 293,944 participants who completed the end-of-study survey, 3,474 reported a new atrial fibrillation diagnosis and only 404 of those, about twelve percent, had received an irregular pulse notification. The original authors acknowledged that the study was not designed to estimate sensitivity. The available data suggest it would not have been impressive.
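The letter’s counts allow a back-of-the-envelope check. The sketch below uses only the two counts quoted above and computes the share of self-reported new diagnoses that had been preceded by a notification. Treat it as a crude proxy, not a sensitivity estimate: the diagnoses were self-reported, the survey respondents were a subset of the cohort, and the study was not designed for this calculation.

```python
def notified_share(new_af_diagnoses: int, notified_among_them: int) -> float:
    """Fraction of self-reported new AF diagnoses that had received a notification.

    A rough sensitivity-like proxy only; it inherits every bias of self-report.
    """
    if new_af_diagnoses <= 0:
        raise ValueError("need at least one reported diagnosis")
    if not 0 <= notified_among_them <= new_af_diagnoses:
        raise ValueError("notified count must lie between 0 and the number of diagnoses")
    return notified_among_them / new_af_diagnoses


# Counts from the NEJM letter as quoted in the text.
share = notified_share(new_af_diagnoses=3474, notified_among_them=404)
print(f"{share:.1%} of reported new diagnoses were preceded by a notification")
```

The point of the exercise is the contrast: a notification that fires is usually right in the study setting, yet most people who turned out to have atrial fibrillation were never notified.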

This is not a story about Apple. The Apple Heart Study was a real piece of scientific work, conducted with serious collaborators at Stanford, published in a top-tier journal, and reasonably described by everyone involved as a meaningful contribution to the field. Its findings have been refined and extended in dozens of follow-up papers. The story is about what happens between the published study and the phrase on the marketing page. Somewhere in the gap between “a positive predictive value of 0.84 among motivated study participants with ECG confirmation” and “clinically validated,” real information is lost. The user receiving the notification at two in the afternoon is operating on the marketing claim, not the published one. The cardiologist appointment may or may not be warranted. The user has no way to tell.

The phrase clinically validated appears on the homepages of healthcare AI companies, longevity products, supplements, screening tools, sleep trackers, blood pressure cuffs, continuous glucose monitors, and an increasingly long list of wellness devices that occupy the regulatory grey area between consumer electronics and medical instruments. It also appears in the press releases of FDA-cleared diagnostic products and in the marketing copy of supplements whose manufacturers have never run a single randomized controlled trial. The phrase has no operational definition. In regulatory terms, it is marketing language. A reader who sees it has been given no information about what kind of validation occurred, on what kind of population, against what kind of comparator, with what kind of effect size, or under whose funding.

This piece is a working method for closing that gap. It walks through the four or five distinct things the phrase can mean, the questions a reader can ask to figure out which one applies, and the structural reasons the phrase persists despite obscuring more than it reveals.

Four meanings (at least)

Meaning one: FDA cleared via the 510(k) pathway

The most common, and most consequential, meaning of clinically validated in healthcare AI is that the product has been authorized by the FDA via the 510(k) pathway. This sounds reassuring. It is, in fact, a much weaker claim than most readers assume.

The 510(k) pathway, codified in 1976 and used today for the vast majority of medical device authorizations in the United States, requires the applicant to show that the device is “substantially equivalent” to a predicate device already on the market. It does not require the demonstration of clinical efficacy. It does not require a randomized controlled trial. It does not, in most cases, require any prospective clinical study at all. What it requires is analytical validation, often retrospective, showing that the new device’s outputs are sufficiently similar to those of an existing legally marketed device.

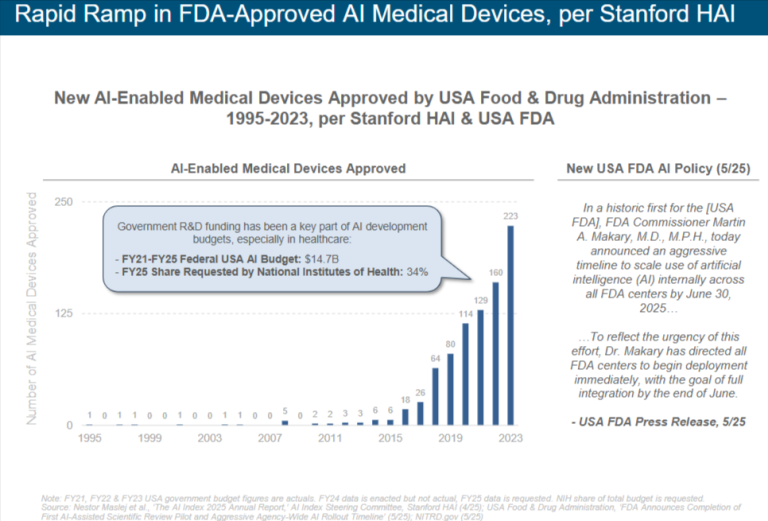

This is not a procedural footnote. It is the structural fact about almost all FDA-authorized healthcare AI. In published analyses of FDA authorizations of AI/ML-enabled medical devices, between ninety-four and ninety-seven percent of authorized devices have come through the 510(k) pathway. An analysis of the 168 ML-enabled Class II devices the FDA authorized in 2024 found that 94.6 percent of them were cleared via 510(k), and only 29.2 percent reported both sensitivity and specificity in their public summary documents. Only 15.5 percent provided demographic data on the populations the devices were validated against. The 510(k) devices were authorized on the basis of substantial equivalence to a predicate, sometimes a predicate whose own clearance rested on substantial equivalence to an earlier predicate, in a chain that can stretch back decades before any prospective clinical work was done.
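Structurally, a predicate chain is a linked list: each clearance points back to the device it was judged equivalent to. The sketch below traces such a chain back to its start and asks whether any link rested on a prospective study. Every device ID, year, and the `had_prospective_study` flag are invented for illustration; real clearance summaries would have to be read one by one to fill them in.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Clearance:
    device_id: str
    year: int
    predicate_id: Optional[str]   # None marks the start of the chain
    had_prospective_study: bool   # hypothetical flag, not an FDA database field


def trace_chain(device_id: str, registry: dict[str, Clearance]) -> list[Clearance]:
    """Follow predicate links from a device back to the start of its chain."""
    chain: list[Clearance] = []
    seen: set[str] = set()
    current = registry.get(device_id)
    while current is not None and current.device_id not in seen:
        seen.add(current.device_id)  # guard against cycles in messy records
        chain.append(current)
        current = registry.get(current.predicate_id) if current.predicate_id else None
    return chain


# Entirely hypothetical registry for illustration.
registry = {
    "DEV-C": Clearance("DEV-C", 2023, "DEV-B", False),
    "DEV-B": Clearance("DEV-B", 2011, "DEV-A", False),
    "DEV-A": Clearance("DEV-A", 1998, None, False),
}

chain = trace_chain("DEV-C", registry)
print(" -> ".join(f"{c.device_id} ({c.year})" for c in chain))
print("Any prospective study in the chain:", any(c.had_prospective_study for c in chain))
```

A newly cleared device can sit at the end of a twenty-five-year chain in which no link was ever tested prospectively, and the clearance itself would not reveal that.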

The implication for the careful reader is direct. “FDA cleared” means a regulatory body has determined that the product is similar enough to an existing product to be allowed on the market. It does not, in itself, mean that anyone has prospectively shown the product helps patients. That second claim, if true, is supported by separate clinical studies, which may or may not have been done, may or may not have been published, and may or may not have shown what the marketing implies.

Meaning two: Has appeared in at least one peer-reviewed publication

A second common meaning of clinically validated is that the product, or its underlying algorithm, has been studied in at least one peer-reviewed publication. This sounds substantial. It often is not.

A single peer-reviewed publication can be a pilot study with twenty participants, conducted at a single center, by investigators who are themselves employees or consultants of the company. It can be a retrospective analysis of data the company already had. It can be a feasibility study designed to test whether the algorithm runs in a clinical environment, not whether it helps. It can be a poster abstract presented at a conference and indexed in the literature without ever undergoing full peer review. In the language of marketing copy, all of these count as clinical validation.

This is not a knock on small early studies, which are how science is supposed to start. It is a description of how the language of those studies gets used. When a reader encounters clinically validated in the context of a publication, the questions to ask are the ones a reviewer would ask: What was the sample size? Was the study prospective or retrospective? Who funded it? Who were the authors and what were their relationships to the company? Did the study test for the effect the product is now being marketed for, or for an upstream proxy? Were the comparators appropriate? Were the results consistent with the marketing claim? In most cases, walking through these questions resolves the ambiguity quickly. In a meaningful minority of cases, the published study would not survive contact with even the lightest external scrutiny, and the marketing claim depends on the reader never looking past the phrase itself.

Meaning three: Retrospective validation against a reference standard

A third meaning, increasingly common in healthcare AI, is that the algorithm has been retrospectively validated on a dataset, often the same dataset family on which it was trained, against a reference standard such as a radiologist’s read or a clinical outcome already recorded in the data. The model produces outputs. The outputs are compared to the reference. Performance metrics are calculated. The numbers go in the marketing deck.
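The retrospective loop just described reduces to a few lines of arithmetic. The sketch below assumes one binary model output and one binary reference label per case; the eight cases are invented toy data, and the function names are illustrative rather than taken from any particular library.

```python
import math


def confusion_counts(predictions: list[bool], reference: list[bool]) -> dict[str, int]:
    """Tally model outputs against the reference standard, case by case."""
    if len(predictions) != len(reference):
        raise ValueError("predictions and reference must have the same length")
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for predicted, actual in zip(predictions, reference):
        if predicted and actual:
            counts["tp"] += 1   # model flags, reference agrees
        elif predicted:
            counts["fp"] += 1   # model flags, reference says no
        elif actual:
            counts["fn"] += 1   # model misses a reference-positive case
        else:
            counts["tn"] += 1   # both say no
    return counts


def _ratio(numerator: int, denominator: int) -> float:
    # Undefined metrics (e.g. no positive cases at all) come back as NaN.
    return numerator / denominator if denominator else math.nan


def summary_metrics(c: dict[str, int]) -> dict[str, float]:
    return {
        "sensitivity": _ratio(c["tp"], c["tp"] + c["fn"]),
        "specificity": _ratio(c["tn"], c["tn"] + c["fp"]),
        "ppv": _ratio(c["tp"], c["tp"] + c["fp"]),
    }


# Invented toy data: eight retrospective cases with a radiologist's read as reference.
model_output = [True, True, False, False, True, False, False, False]
radiologist_read = [True, False, True, False, True, False, False, False]
metrics = summary_metrics(confusion_counts(model_output, radiologist_read))
print(metrics)
```

Nothing in this arithmetic records where the cases came from. Run on a held-out slice of the training hospital’s data or on data from a different hospital, it produces numbers that look identical in a marketing deck but carry very different weight.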

Retrospective validation is essential work. It is the first step in any responsible model development pipeline. It is also the easiest kind of validation to game, intentionally or otherwise, and the kind most likely to overstate the performance the product will exhibit when deployed in a new environment. The reasons are well documented in the methodological literature on healthcare AI. Test sets drawn from the same site as the training data inherit that site’s particular imaging equipment, patient demographics, clinical workflow, and labeling conventions. Models tuned on a clean retrospective dataset often degrade significantly when run prospectively on messier real-world data. The Epic Sepsis Model, deployed across hundreds of hospitals on the strength of internal validation that produced an AUC between 0.76 and 0.83, achieved an AUC of only 0.63 when externally validated by an independent group at the University of Michigan. The gap between performance on the developer’s own retrospective data and performance in a new clinical setting is the gap most likely to determine whether a healthcare AI product is useful or harmful at the bedside, and clinically validated obscures the distinction entirely.
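Figures such as 0.83 and 0.63 are easier to read with the definition in hand. AUC is the probability that a randomly chosen patient who has the condition receives a higher score than a randomly chosen patient who does not, so 0.5 is a coin flip. The sketch below computes that definition directly by comparing every positive-negative pair, with ties counted as half. The scores are invented toy values, and real evaluations use faster rank-based formulas that give the same answer.

```python
from itertools import product


def auc_pairwise(scores_pos: list[float], scores_neg: list[float]) -> float:
    """Probability that a random positive case outscores a random negative case.

    Brute force over all pairs; ties count as half a win.
    """
    if not scores_pos or not scores_neg:
        raise ValueError("need at least one positive and one negative score")
    wins = 0.0
    for p, n in product(scores_pos, scores_neg):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Invented risk scores for patients who did and did not develop the condition.
sepsis_scores = [0.9, 0.7, 0.4]
no_sepsis_scores = [0.8, 0.3, 0.2, 0.1]
print(f"AUC = {auc_pairwise(sepsis_scores, no_sepsis_scores):.3f}")
```

On this reading, an AUC of 0.63 means the model ranks a patient who develops sepsis above one who does not only about 63 percent of the time, not far above the 50 percent a coin would manage.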

Meaning four: Prospective deployment data

The fourth, rarest, and most meaningful version of clinically validated is that the product has been studied prospectively in the population it is meant to serve, ideally in a randomized controlled trial, ideally against a meaningful comparator, ideally with outcomes that matter to patients, ideally with independent investigators reporting the results. This is the version of validation that the lay reader is imagining when they see the phrase. It is also, across the universe of products that use the phrase, the least common version actually delivered.

Prospective clinical trials are expensive, slow, and risky from the developer’s perspective. They sometimes fail. The companies that have run them, and have positive results to show for the effort, tend to highlight those results prominently in their marketing, naming the studies, the journals, the sample sizes, and the effect sizes. The companies that have not run them tend to use the phrase clinically validated without further specification, on the assumption that most readers will not push past it. This pattern, that the specific claim is loud where it exists and the generic phrase substitutes where it does not, is one of the most reliable signals in the field.

Bonus meaning: Has clinical advisors

A meaning that is not really validation at all, but borrows the language, has become common in the longevity and wellness space. A product is described as “clinically backed” or “clinically formulated” or, with some creative marketing license, “clinically validated,” on the basis that the company employs or consults with a clinician. This is not validation. It is endorsement, or at most consultation. The reader who notices the difference between “validated by a study” and “formulated with input from a clinician” has caught the move. The two phrases are often used interchangeably, especially in supplement marketing, where the limits on health claims are set by the Dietary Supplement Health and Education Act of 1994 and the language of clinical research is borrowed without its structure.

Why the phrase persists

It would be possible to read all of this as a story about bad actors and weak regulation. That reading would be incomplete. The phrase clinically validated persists because it sits at the intersection of several structural pressures, none of which is going away soon.

The first pressure is the gap between what the FDA permits and what the marketing department wants to say. A 510(k) clearance allows a product on the market. It does not, in itself, allow the company to claim clinical efficacy beyond what was demonstrated in the clearance submission. But the line between “cleared by the FDA” and “clinically validated” is one the marketing copy is incentivized to blur, and the enforcement apparatus that polices it, the FDA’s promotional advertising review and the FTC’s consumer protection authority, is slower and less well resourced than the marketing teams it is meant to police. A 2023 systematic review of marketing materials for AI- and ML-enabled medical devices, published in JAMA Network Open, examined 119 FDA-cleared devices and found that 12.6 percent had marketing claims discrepant from their FDA clearance and another 6.7 percent had claims the authors classified as contentious. In practice, the line is blurred regularly.

The second pressure is consumer expectation. The buyer of a healthcare AI product, or a longevity supplement, or a sleep tracker, wants to know if the thing works. They are not equipped to read FDA clearance documents, parse peer-reviewed studies, or evaluate the methodological strength of retrospective validations. They want a single phrase that tells them the product is real. Clinically validated fills that role. It signals to the consumer that the product belongs in a different category from products that lack the phrase, and it does so without requiring the consumer to do any further work. The fact that the phrase is essentially uncalibrated, that it covers a range of evidentiary states from rigorous prospective trial to single small retrospective pilot to no study at all, is invisible to the buyer at the moment of decision.

The third pressure is the journalistic chain we wrote about in this publication’s anchor essay. Press releases describe products as clinically validated. News articles, working from press releases under deadline pressure, repeat the phrase. Social media posts compress further. By the time the buyer encounters the product, the phrase has been used so many times in so many contexts that it has become invisible, a kind of background noise that signals legitimacy without conveying any specific information.

The fourth pressure, and the one most likely to grow in the coming years, is the increasing role of AI in medicine generally. A healthcare AI startup pitching to investors, to hospitals, or to consumers needs to say something about the science behind its product. Clinically validated, with its blurred boundaries and its veneer of scientific legitimacy, is the phrase that lets the company say something without committing to anything specific. As the field grows, the phrase grows with it.

What to ask instead

For the reader who wants to do better than the phrase allows, a working method is short enough to memorize. When you encounter a product described as clinically validated, run through the following sequence before drawing any conclusion about whether the product works.

First, ask which regulatory pathway, if any. If the product has FDA marketing authorization, did it come through 510(k), De Novo, or premarket approval (PMA)? For products that have not been through FDA premarket review, what is the regulatory category: dietary supplement, general wellness product, laboratory-developed test? The pathway sets the evidentiary floor under everything else.

Second, ask for the specific study. The reader who asks “what study?” and gets a vague answer, a deflection, or a reference to the company website’s marketing page, has learned something important. The reader who gets a specific citation can keep going.

Third, ask whether the study was prospective or retrospective. In healthcare AI, this is the single most predictive question for whether a product will perform as marketed in deployment. Retrospective validation on data drawn from the same sources as the training data tells you that the model fits the kind of data it was built on. It does not tell you that it will work in your hospital, on your population, in your workflow.

Fourth, ask who funded the study, who the authors are, and what their relationships are to the company. Industry-funded studies are not automatically wrong. But their sponsors have a stake in the outcome, and that stake has predictable effects on how studies are designed, analyzed, and reported.

Fifth, ask about effect size in absolute terms. “Improved detection by thirty percent” is a relative claim. “Detected three additional cases per thousand patients screened” is an absolute claim. The two can describe the same finding and produce very different impressions of clinical relevance.
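Converting a relative claim into absolute terms needs one extra number, the baseline rate, and marketing copy rarely supplies it. The sketch below assumes hypothetical baselines of 10 and 1 detected cases per 1,000 screened and applies the same thirty percent relative improvement to each. It also reports how many people must be screened to find one additional case.

```python
def absolute_from_relative(baseline_per_1000: float, relative_gain: float) -> tuple[float, float, float]:
    """Convert a relative improvement in detection into absolute terms.

    Returns (new rate per 1,000, additional cases per 1,000,
    people screened per additional case found).
    """
    new_rate = baseline_per_1000 * (1 + relative_gain)
    additional = new_rate - baseline_per_1000
    screened_per_extra_case = 1000 / additional if additional > 0 else float("inf")
    return new_rate, additional, screened_per_extra_case


# Hypothetical baselines: the same "thirty percent" claim at two detection rates.
for baseline in (10.0, 1.0):
    new_rate, extra, per_case = absolute_from_relative(baseline, 0.30)
    print(f"baseline {baseline:g}/1000 -> {new_rate:g}/1000: "
          f"{extra:g} extra per 1000, about {per_case:.0f} screened per extra case")
```

At a baseline of 10 per 1,000, thirty percent is the three additional cases in the example above. At a baseline of 1 per 1,000, the identical relative claim yields a fraction of a case per thousand, and thousands of people must be screened to find one more.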

Sixth, and most importantly, ask whether the study tested the claim the marketing is making. A product may be clinically validated for one purpose and marketed for an adjacent purpose where no validation has been done. In the consumer wearable space, this move is routine. A device cleared for tracking heart rate at rest is marketed for atrial fibrillation detection during exercise. A device validated in adults is marketed for use in adolescents. A model validated on one institution’s data is marketed for general use across institutions. The phrase clinically validated travels easily across these boundaries because it does not specify what was validated, where, or for whom.
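The six questions can be carried as a simple checklist. The sketch below is one hypothetical way to encode them. The class, its field names, and the two caution rules are illustrative choices, not an established tool. An unanswered question stays `None`, so what is still unknown about a claim is always visible.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ClaimReview:
    # Hypothetical fields, one per question in the six-step method.
    regulatory_pathway: Optional[str] = None      # e.g. "510(k)", "De Novo", "PMA", "general wellness"
    study_citation: Optional[str] = None          # a specific, verifiable reference
    study_design: Optional[str] = None            # "prospective" or "retrospective"
    funding_and_authors: Optional[str] = None     # sponsor and author ties to the company
    absolute_effect: Optional[str] = None         # e.g. "3 additional cases per 1,000 screened"
    tested_marketed_claim: Optional[bool] = None  # did the study test the marketed claim?


QUESTIONS = {
    "regulatory_pathway": "Which regulatory pathway, if any?",
    "study_citation": "What is the specific study?",
    "study_design": "Was it prospective or retrospective?",
    "funding_and_authors": "Who funded it, and how are the authors tied to the company?",
    "absolute_effect": "What is the effect size in absolute terms?",
    "tested_marketed_claim": "Did the study test the claim the marketing makes?",
}


def open_questions(review: ClaimReview) -> list[str]:
    """Questions not yet answered, in the order the method asks them."""
    return [QUESTIONS[f.name] for f in fields(review) if getattr(review, f.name) is None]


def cautions(review: ClaimReview) -> list[str]:
    """Answers that, on their own, weaken a 'clinically validated' claim."""
    notes = []
    if review.study_design == "retrospective":
        notes.append("Only retrospective validation reported")
    if review.tested_marketed_claim is False:
        notes.append("Study did not test the marketed claim")
    return notes


review = ClaimReview(regulatory_pathway="510(k)", study_design="retrospective")
print(open_questions(review))
print(cautions(review))
```

Keeping "not yet answered" distinct from "answered unfavorably" mirrors the method itself: a vague reply to the second question is information, not a gap to be filled in with the marketing claim.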

Back to the wrist

The user with the irregular heart rhythm notification, sitting at the desk at two in the afternoon, has none of this context. They have a phrase on a marketing page. They have a notification on a wrist. They have a vague sense that the device is medical, that a regulator has approved it, that a study at Stanford was published in a major journal, and that all of this adds up to a reason to call a cardiologist.

The cardiologist appointment may turn out to be the right call. Atrial fibrillation is a real condition with serious downstream consequences if missed, and a low-yield screening tool that occasionally catches a real case is not, in itself, a failure. The question this piece is asking is not whether the watch should exist or whether it does any good. It is whether the user is operating with the information they need to make a good decision about what the notification means.

In the published study, the positive predictive value of 0.84 was measured under conditions specific to the study. The user’s actual probability of having atrial fibrillation, given a notification, depends on the user’s underlying risk, the duration of monitoring, the specific algorithm version running on the device, and a dozen other factors that the phrase clinically validated does not capture. The user does not need a graduate course in Bayesian statistics to be a more informed consumer of the notification. They need to understand that clinically validated is not a single thing, and that the responsible reading of any such phrase is to look past it for the specific claims underneath.
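The core of the dependence on underlying risk is a single application of Bayes’ rule. The sketch below computes positive predictive value from sensitivity, specificity, and prevalence. The sensitivity of 0.90 and specificity of 0.99 are hypothetical values chosen for illustration, not figures from the Apple Heart Study, which did not estimate sensitivity.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """P(condition | positive alert), by Bayes' rule."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical detector, unchanged, applied to populations with different underlying risk.
for prevalence in (0.01, 0.10):
    ppv = positive_predictive_value(0.90, 0.99, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.0%}")
# prevalence 1%: PPV 48%
# prevalence 10%: PPV 91%
```

The detector is identical in both lines. Only the population changes, and the meaning of an alert roughly doubles. A PPV measured in one study population does not transfer directly to a forty-two-year-old at a desk.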

This is the literacy this publication exists to build. Clinically validated is the most common phrase in the healthcare AI marketing vocabulary, and the most easily misread. The reader who internalizes the four meanings, and runs through the six questions, will find that most of the field becomes legible quickly. The products with substantial prospective evidence will say so, in specific terms, with citations a reader can verify. The products with thin or absent evidence will use the phrase as a substitute for the specifics, and the substitution will be visible. The reader who has learned to see the substitution has gained a permanent piece of cognitive equipment.

The next time the notification arrives on the wrist, the reader will, at least, know what questions to ask before booking the appointment.

Sources and further reading

Perez MV, Mahaffey KW, Hedlin H, et al. Large-scale assessment of a smartwatch to identify atrial fibrillation. New England Journal of Medicine. 2019;381:1909-1917. doi:10.1056/NEJMoa1901183

Campion EW, Jarcho JA. Watched by Apple, editorial. New England Journal of Medicine. 2019;381:1964-1965.

US Food and Drug Administration. Artificial intelligence and machine learning enabled medical devices, public list. Updated 2025.

US Food and Drug Administration. The 510(k) program: evaluating substantial equivalence in premarket notifications. Guidance document.

Lyell D, Wang Y, Coiera E, Magrabi F. Marketing and US Food and Drug Administration clearance of artificial intelligence and machine learning enabled software in and as medical devices, a systematic review. JAMA Network Open. 2023;6(6):e2321811.

Analyses of FDA authorized AI and ML enabled medical devices reporting that 94 to 97 percent of authorizations have come through the 510(k) pathway, including landscape papers in npj Digital Medicine, Electronics, and JACC: Advances, 2020 through 2025.

Dietary Supplement Health and Education Act of 1994 (DSHEA). Public Law 103-417.

For background on the regulatory framing of 510(k) substantial equivalence and the predicate device chain, see published analyses in JAMA, BMJ, and Health Affairs, 2018 through 2024.